We Harass To Protect Our Group Reputations

Results and analysis from my master's thesis in Psychology

I’ve spent the last 25 years living online and I’ve always wanted to know why we’re all so mean to each other. Now that my thesis, “Understanding Harassment Inside Online Communities: The Role of Social Identity Threat,” has been officially published, I wanted to report the results in a more user-friendly format. This is the second post on the topic, and you can read Part 1 if you want to know more about the general causes of online harassment and related concepts. Or, you can keep reading if you want to know how I used scientific methods to answer the question: Do Social Identity Threats Cause Harassment Inside Online Communities?

The quick summary is that people support online harassment inside groups they belong to when it is used to defend the reputation of those groups. In the common internet situations that I asked users about, an increase in the perception of social identity threat to shared group reputation predicted an increase in support for high-harassment responses inside that group. This effect was significantly stronger than the effects of perceived threats to the group’s practical usefulness or to the participant’s own emotional state. How do I know this is true?

Survey Research Is Fun and Easy

I know about the effects of social identity threat because I sent Qualtrics surveys to 601 random internet users (paying each $5) using the Prolific research platform and then analyzed the data using linear regressions. Confirming other studies (Peer 2021), Prolific worked well as a psychology research platform and granted me access to a high-quality pool of participants in exchange for a percentage of the participation fee. My only problem with the platform (which I got community help with) was that participant slots quickly filled up so I had to stagger the opening times and countries to get a decent global sample of internet users.

Prolific does not have enough participants registered in India or Africa to be truly representative, but my sample of English speakers included 332 participants from North and South America, 252 from Europe, and 27 from the rest of the world. I used data from 96% of the paid participants, which is a very high rate for internet research. Overall, the demographics were much more representative of the average internet user than you would get from using a convenience sample of college undergraduates or unpaid volunteers.

The process used two separate surveys: In the preliminary survey I asked 400 people to read descriptions of hypothetical conflict inside online groups and respond to some statements using a Likert rating (7 point scale from Strongly Disagree to Strongly Agree). I first asked them to read descriptions of situations and rate how well they understood the description, how upset the situation would make them (the Feeling Upset variable), and how threatening the situation would be to the reputation (Group Reputation Threat) and usefulness (Group Use Threat) of a group. Then, I asked them to read possible responses and rate how well they understood the responses and how abusive and harassing they would be (Response Harassment).

I then analyzed the data to find the most useful situations and responses and used them in the main survey to ask a second group of participants to rate their level of support for reactions taken in response to different situations (the Support for Response variable). Some of the reactions were written to represent a reasonable response and others were written to represent online harassment, and I used participant ratings to categorize some responses as cases of online harassment.
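As a rough illustration of how ratings like these can be turned into a per-response score and a high-harassment category, here is a minimal Python sketch. The response names, the ratings, and the cutoff of 5.0 are all invented for the example; this post does not give the rule that was actually used, and the real analysis was done in SPSS.

```python
from statistics import mean

# Likert answers coded 1 (Strongly Disagree) to 7 (Strongly Agree).
# Each row is one hypothetical participant rating one hypothetical response
# on how abusive and harassing it would be. None of this is thesis data.
ratings = [
    ("polite_request", 1), ("polite_request", 2), ("polite_request", 2),
    ("public_insult", 6), ("public_insult", 7), ("public_insult", 5),
    ("sarcastic_reply", 4), ("sarcastic_reply", 3), ("sarcastic_reply", 5),
]

# Hypothetical threshold chosen only for this example.
HARASSMENT_CUTOFF = 5.0


def mean_rating_by_item(rows):
    """Average the Likert scores for each rated item."""
    grouped = {}
    for item, score in rows:
        grouped.setdefault(item, []).append(score)
    return {item: mean(scores) for item, scores in grouped.items()}


# Per-response score, analogous to the Response Harassment variable.
response_harassment = mean_rating_by_item(ratings)

# Responses whose average rating reaches the (assumed) cutoff.
high_harassment = sorted(
    item for item, score in response_harassment.items()
    if score >= HARASSMENT_CUTOFF
)

print(response_harassment)
print(high_harassment)
```

The same averaging step would apply to the situation ratings (Feeling Upset, Group Use Threat, and Group Reputation Threat), with one mean per situation instead of one per response.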

To help this make sense, here is an example question from the main survey where I asked users about their level of support for harassment. In this example (labeled as TeamGame_Insulting_Racist) the survey paired a situation rated for moderate levels of Group Use Threat and Group Reputation Threat with a high-harassment response and I used the ratings to compute the Support for Response:

Did Situation Threats Predict Support for Harassment?

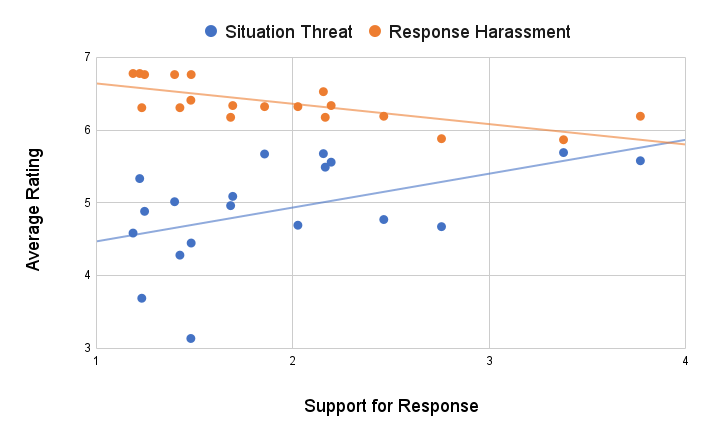

After I collected the data, I used hierarchical/multiple linear regression to work out which variables were most important in predicting support for high-harassment responses like the example above. The proper regression tables start on page 45 of the thesis if you know how to read them, but here is the summary: The most important factor was the degree of Response Harassment, which is good because it means that participants were less likely to support severe harassment in general. Another important factor was the total Situation Threat, calculated by averaging the Feeling Upset, Group Use Threat, and Group Reputation Threat ratings from the preliminary survey. Looking at this chart of high-harassment responses you can see how support for a response goes up when situation threat increases (blue) and goes down when the severity of response harassment increases (orange):

The three situation ratings of Feeling Upset, Group Use Threat and Group Reputation Threat correlated with each other as I expected: a situation that threatens the reputation of a group you belong to will probably also make you feel upset. I ran several hierarchical regression models to test the importance of each factor, and it turned out that Group Reputation Threat was the most significant threat factor in predicting support for harassment (0.36 unstandardized coefficient at p < 0.001). Coefficients and p-values can be difficult to interpret, but this essentially means that an average increase of 1 point on the Likert scale from “Neither agree nor disagree” to “Somewhat agree” across all ratings for the statement “The other user’s actions could hurt the group’s reputation” corresponded with an increase of 0.36 points in the average ratings for the statement “This reaction is an example of harassment.”

The small p-value (psychology generally uses 0.05 as the highest acceptable p-value) essentially means that if there were no real relationship between these variables, there would be a less than 0.1% chance of seeing a relationship at least this strong through random luck alone. In other words, if I used a perfect procedure and the resulting data met all of the statistical assumptions of the regression, a result like this would be very hard to explain as a coincidence. I know that the process and data normality were not perfect, but because the p-value is so far below the usual cutoff, it is still very likely that there is a real relationship between group reputation threat and support for harassment.
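For readers who think better in code, here is a minimal Python sketch of what a two-block hierarchical regression does and how an unstandardized coefficient is read. It is not the SPSS analysis from the thesis: the data are synthetic, the intercept and the Response Harassment effect are invented, and the 0.36 and -0.08 values are borrowed from this post only so the output looks familiar. The sketch does not compute p-values.

```python
import random


def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def fit_ols(rows, y):
    """Ordinary least squares. Returns (coefficients, r_squared); coefficients[0] is the intercept."""
    X = [[1.0] + list(r) for r in rows]
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(k)]
    beta = solve(xtx, xty)
    predicted = [sum(b * v for b, v in zip(beta, row)) for row in X]
    y_mean = sum(y) / len(y)
    ss_res = sum((yi - pi) ** 2 for yi, pi in zip(y, predicted))
    ss_tot = sum((yi - y_mean) ** 2 for yi in y)
    return beta, 1 - ss_res / ss_tot


# Synthetic data: 200 made-up observations with ratings on a 1-7 scale.
rng = random.Random(0)
data = []
for _ in range(200):
    harassment = rng.uniform(1, 7)
    upset = rng.uniform(1, 7)
    use_threat = rng.uniform(1, 7)
    reputation_threat = rng.uniform(1, 7)
    # Assumed "true" relationship, chosen only for illustration.
    support = (5.0 - 0.5 * harassment - 0.08 * use_threat
               + 0.36 * reputation_threat + rng.gauss(0, 0.3))
    data.append((harassment, upset, use_threat, reputation_threat, support))

y = [row[4] for row in data]

# Block 1: Response Harassment only. Block 2: add the three threat ratings.
block1, r2_block1 = fit_ols([row[:1] for row in data], y)
block2, r2_block2 = fit_ols([row[:4] for row in data], y)

upset_coefficient = block2[2]
reputation_coefficient = block2[4]
r2_change = r2_block2 - r2_block1

print(f"R^2 block 1: {r2_block1:.3f}, block 2: {r2_block2:.3f}, change: {r2_change:.3f}")
print(f"Group Reputation Threat coefficient: {reputation_coefficient:.2f}")
```

The coefficient printed at the end is read the same way as the one in the text: holding the other predictors fixed, a 1-point increase in the Group Reputation Threat rating goes with roughly that many points of change in the outcome. The change in R² between the two blocks shows how much extra variance the threat ratings explain beyond Response Harassment alone.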

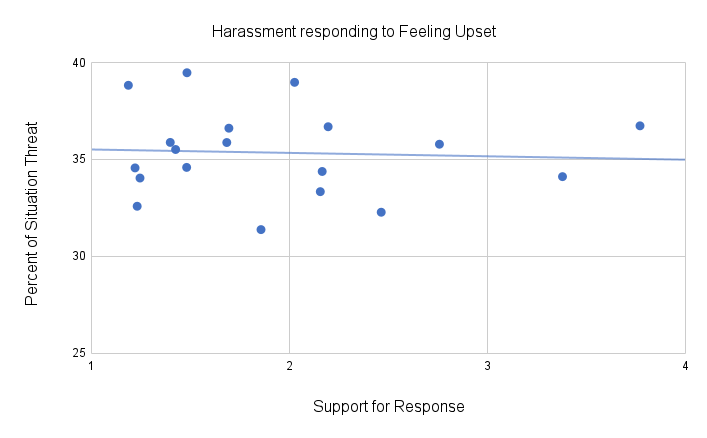

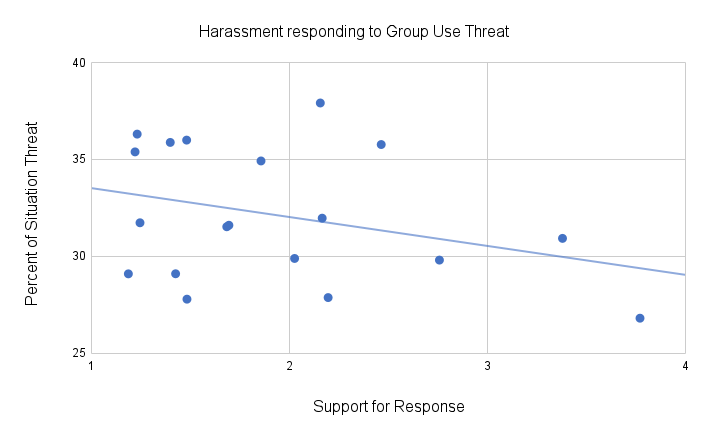

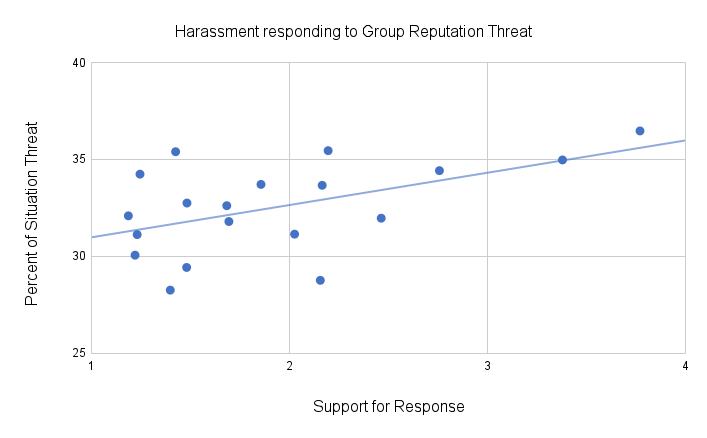

For the other threat variables, the Feeling Upset factor did not provide any significant predictive value and the Group Use Threat factor only slightly lowered the support for harassment (-0.08 coefficient at p < 0.05). This means that these two factors were much less important than Group Reputation Threat. One way to visualize these different relationships is using the three simplified charts below, which compare the percentage of total Situation Threat coming from each threat variable with the level of support for high-harassment responses:

These charts do not represent the actual statistics used to compute the coefficients and p-values listed above, because it is difficult to intuitively visualize multivariable linear regressions. My general conclusion from this data is that across the situations I surveyed (which were validated by the users as representing a mix of the three different types of threats), actions inside a social group that threaten the group’s reputation will increase support for online harassment within that group. These results correspond with the theoretical concept of social identity threat to Group Morality Value (Branscombe 1999) because the questions asked about morally relevant topics like politics. In other words:

Social Identity Threats to Group Reputation motivate increased harassment inside online communities.

What Else Motivated Harassment?

As I mentioned in Part 1, other research has shown that demographic factors like gender help explain the motivation for online harassment, so I wanted to know the importance of those factors compared to the threat factors discussed above. To investigate this, I asked participants about their age, gender identity, and where they grew up, and added those factors to the hierarchical regression models. Participant age and place of origin were not significant predictors of support for online harassment, but male gender identity did significantly predict higher support for online harassment (coefficient 0.27, p < 0.001), confirming earlier research (Maass 2003) and a lifetime of anecdotal observations. This means that self-reported gender identity and the perception of threat to group reputation were roughly equal in importance in predicting the level of support for online harassment.

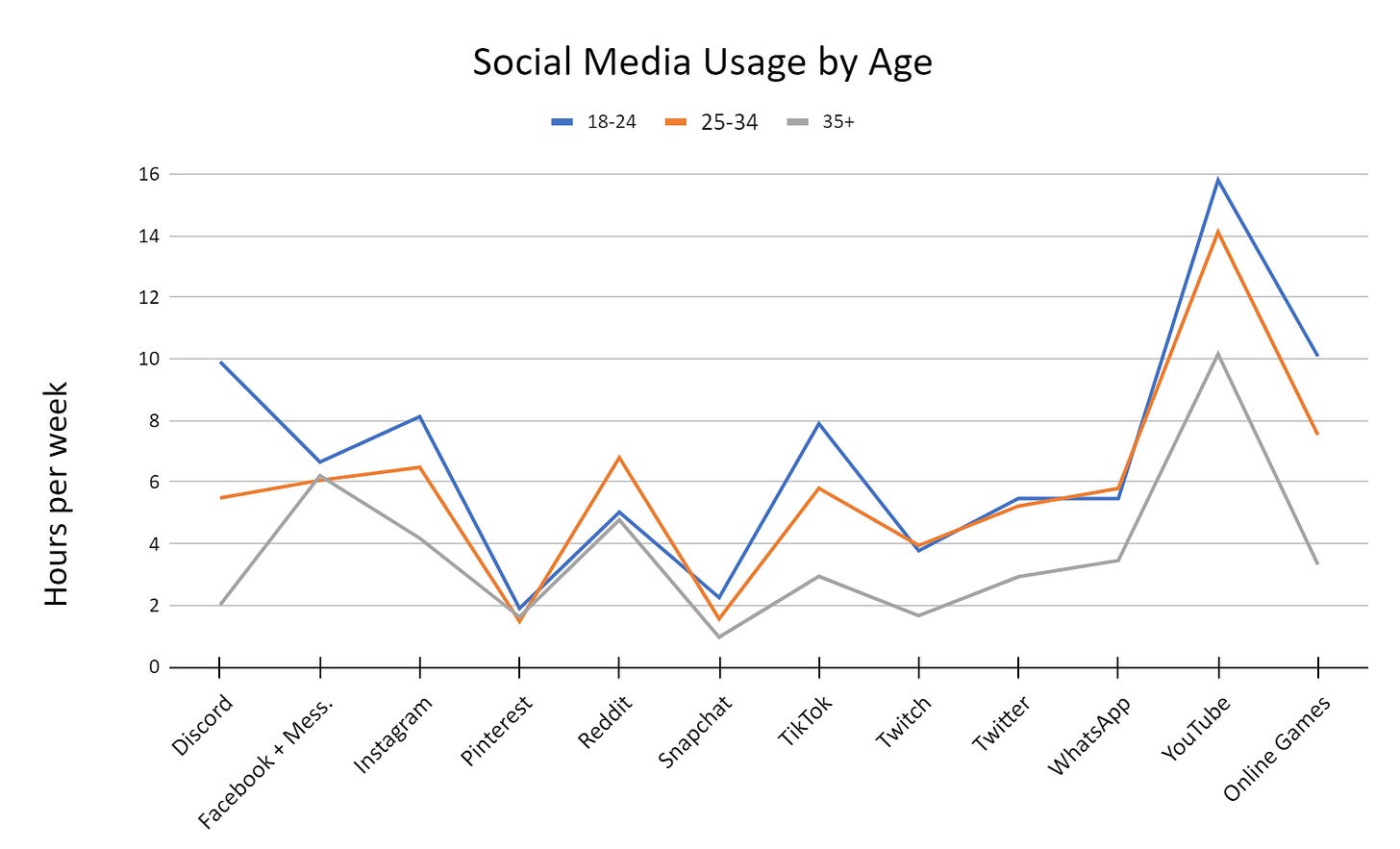

I also asked participants to report how many hours per week they used different internet services. I first used this data to filter out the results of participants who reported very low internet usage or entered apparently random data. After filtering, the data showed that internet users have highly variable patterns of internet use but I was able to gather some interesting demographic trends. As the chart below shows, younger users spent way more time using services like Discord and TikTok:

After adding this usage data to the hierarchical regressions, only a few factors were useful in predicting support for online harassment. Increases in the number of hours spent playing online games and in the total number of online services used predicted small but significant increases in support for harassment. In contrast, the number of hours spent using Reddit and Telegram predicted small decreases in support for harassment. Given personal experience and prior research it is not surprising that heavy gamers were more likely to support online harassment, but the effects of all of these usage factors were much smaller than the gender and reputation factors described above. This could be an interesting area for future research.

What Does This All Mean?

Before I started the data analysis, I expected that increases in threats to group usefulness and group reputation would both increase support for online harassment inside that group. So, I was surprised to see that threats to group reputation were much more important than threats to group usefulness. At least in the situations I asked about, participants were much more likely to support harassment of group members who made the group look worse in the eyes of other people than they were to support harassment of members who made the group less useful.

More research is required to determine if this is only an artifact of the specific survey questions used in this study, but in general these results support the core concept of social identity theory: We care a lot about the perceived value of groups that we belong to. In fact we probably care more about the abstract value (moral, aesthetic, and reputational) associated with group identities than we do about the ability of groups to actually get things done. The results from my thesis provide more empirical evidence that social identities are important psychological constructs that help to explain real human behavior.

These results also have practical implications for those of us who design and implement internet social systems and multiplayer games. This is important for programmers, UX designers, executives, and anyone else who cares about the well-being of others: Unless you want everyone to feel depressed and angry when they experience online harassment, you should work to minimize unnecessary threats to the shared reputation of online groups. I plan to expand upon this point in a future post where I can provide more speculative suggestions.

Should You Listen To Me?

This is where I acknowledge my own limitations as a researcher and possible issues with the results and conclusions. Because I am not a statistics expert, I used a fairly simple analysis strategy of hierarchical multiple linear regression. I used SPSS for analysis because I learned about it in my graduate classes but I wish I had used R instead. SPSS is difficult to use and would often freeze up and delete my progress. The theoretically correct way to do this analysis would probably be to use a true multilevel model that differentiates participant-level variance from question-level variance. Despite these issues, I am confident that my research has detected a real effect that should replicate using different participants and survey questions.
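For anyone curious what the multilevel alternative mentioned above might look like, one common way to write it is a model with crossed random intercepts for participants and questions. This is only a sketch: the choice of predictors and the symbols below are assumptions for illustration, not a specification from the thesis.

```latex
\begin{aligned}
y_{pq} &= \beta_0
        + \beta_1\,\mathrm{ResponseHarassment}_q
        + \beta_2\,\mathrm{ReputationThreat}_q
        + u_p + v_q + \varepsilon_{pq} \\
u_p &\sim \mathcal{N}(0, \sigma_u^2), \qquad
v_q \sim \mathcal{N}(0, \sigma_v^2), \qquad
\varepsilon_{pq} \sim \mathcal{N}(0, \sigma_\varepsilon^2)
\end{aligned}
```

Here $y_{pq}$ is participant $p$'s Support for Response rating on question $q$, $u_p$ captures how much a given participant tends to support responses overall, and $v_q$ captures question-level variation that the predictors do not explain. Separating $\sigma_u^2$ from $\sigma_v^2$ is what distinguishes participant-level variance from question-level variance.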

Although I am not fully confident in my analysis strategy, I am confident in the general procedure and quality of the collected data, so I have uploaded the raw data from the main survey and my appendices as Excel files attached to the thesis for anyone who is interested. I am not currently planning to directly follow up on this research, but the knowledge and skills I gathered during this process will definitely inform my future technical and design work with online communities. Hopefully the information in these posts and my thesis as a whole will serve as the foundation or inspiration for someone else who wants to investigate the real causes of online harassment and other important social behavior. If you have any questions about the process or results of this thesis, you can either leave a comment below or email me directly at benzeigler at gmail dot com.